Introducing Confluent Platform 5.5

We are pleased to announce the release of Confluent Platform 5.5. With this release, Confluent makes event streaming more broadly accessible to developers of all backgrounds, enhancing three categories of features that complement Apache Kafka® and make it easier to build event streaming applications:

- First, we are adding support for Protobuf and JSON schemas to Confluent Schema Registry and throughout our entire platform, further expanding our rich, pre-built ecosystem for Kafka

- Next, we are introducing exactly-once semantics for our librdkafka-based clients, along with admin functions (in preview) for Confluent REST Proxy, enhancing our tooling for multi-language development with Kafka

- Finally, we are continuing to invest in an expansive feature set for ksqlDB, the event streaming database that makes it simple to build event streaming applications

All of these features are free to download, modify, use, and redistribute. Librdkafka-based clients are open source under the Apache 2.0 license, while Schema Registry, REST Proxy, and ksqlDB are available under the Confluent Community License.

As with any Confluent Platform release, there are a host of details in the release notes, but this blog post provides a high-level overview of what’s included.


Rich, pre-built ecosystem

Confluent provides a rich, pre-built ecosystem for Kafka so you can spend the majority of your time building event streaming applications that will change your business and less time figuring out the inner workings of Kafka. One of the most popular features within the ecosystem is Confluent Schema Registry, which makes it easier for you to manage the changing definitions of the events you write to topics. This becomes more important as the complexity of your application grows and multiple producers and consumers share the same topics, each on its own release cycle. Enforcing schema agreements between clients is key to succeeding with any non-trivial system built on Kafka.

Confluent Platform expands the Kafka ecosystem with Protobuf and JSON support

Confluent Schema Registry previously supported one data format: Apache Avro™. With the release of Confluent Platform 5.5, we’re adding support for Protobuf and JSON to Schema Registry, providing you with the same assurances of data compatibility and consistency for two highly requested formats. Schema Registry also applies compatibility rules to both formats, allowing schemas to evolve as your data changes without impacting consumer applications. By expanding support to Protobuf and JSON schemas, Confluent Platform now offers you more choices of serialization format.
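As a rough illustration of what registering a non-Avro schema can look like, the sketch below builds (but does not send) an HTTP request for the Schema Registry REST API. It assumes the `POST /subjects/{subject}/versions` endpoint with a `schemaType` field in the body. The host `localhost:8081`, the subject `orders-value`, the `Order` message, and the helper name `build_register_request` are all made up for the example.

```python
import json
from urllib.parse import quote

# Schema types this sketch accepts; "AVRO" is the pre-5.5 format.
SUPPORTED_TYPES = {"AVRO", "PROTOBUF", "JSON"}


def build_register_request(base_url, subject, schema_text, schema_type):
    """Return (url, headers, body) for registering a schema under a subject.

    Hypothetical helper: it only assembles the request so it can be inspected
    or handed to any HTTP client.
    """
    if schema_type not in SUPPORTED_TYPES:
        raise ValueError(f"unsupported schema type: {schema_type}")
    url = f"{base_url.rstrip('/')}/subjects/{quote(subject, safe='')}/versions"
    headers = {"Content-Type": "application/vnd.schemaregistry.v1+json"}
    # The schema itself travels as a string inside the JSON body.
    body = json.dumps({"schemaType": schema_type, "schema": schema_text})
    return url, headers, body


# Illustrative Protobuf definition (not taken from any real system).
ORDER_PROTO = 'syntax = "proto3"; message Order { string id = 1; double amount = 2; }'

url, headers, body = build_register_request(
    "http://localhost:8081", "orders-value", ORDER_PROTO, "PROTOBUF"
)
print(url)
print(body)
```

Swapping `"PROTOBUF"` for `"JSON"` and passing a JSON Schema document as `schema_text` would follow the same pattern under these assumptions.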

Confluent Platform 5.5 also includes support for Protobuf and JSON throughout its other components. Kafka Connect, Kafka Streams, ksqlDB, REST Proxy, and Confluent Control Center can all now register and retrieve Protobuf and JSON schemas from Schema Registry. Additionally, Schema Validation, an exciting new commercial feature that we introduced in Confluent Platform 5.4, now provides the platform with a programmatic way of enforcing schema compatibility at the broker level. This ensures that every single message inbound to a particular topic is validated for key and value schema compliance with the corresponding policy in Schema Registry—even if those messages are in Protobuf or JSON format.

Protobuf and JSON were the two most requested data formats for Schema Registry support, but if you want to connect applications to Kafka using other formats, such as XML, Thrift, or Apache Parquet, we’ve also added support for customizable plug-in schemas. You can now define your own schemas for Schema Registry, allowing for greater data compatibility within Kafka topics irrespective of the underlying format.

Multi-language development

Confluent provides the tools you need to connect any application to Kafka, regardless of your preferred programming language. This tooling includes our librdkafka-based clients for C/C++, Python, Go, and .NET, along with a RESTful interface to Kafka through Confluent REST Proxy. For even greater flexibility when developing event streaming applications and to achieve greater parity with the native Java client, we are continuing to invest in librdkafka and REST Proxy.

Enhanced multi-language development with exactly-once semantics for librdkafka-based clients

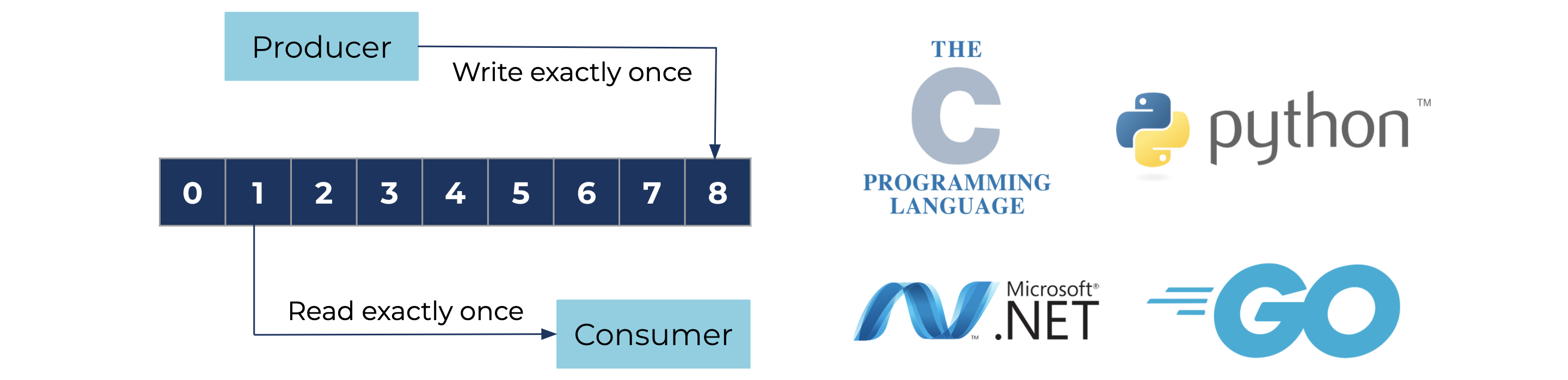

Exactly-once semantics allows an event streaming application to read from and write to a set of topics transactionally, so the application can ensure that any given message is processed one time and only one time. When sending and receiving critical messages to and from Kafka, it is often vital that a message is neither skipped nor repeated, particularly if that message triggers some action in your business. Exactly-once semantics makes this possible.

Confluent Platform 5.5 introduces exactly-once semantics to the librdkafka-based clients by adding support for the idempotent producer and transactions. This means applications written in C/C++, Python, Go, or .NET can now guarantee that messages processed by a given event streaming application will be processed exactly once. With a broader range of Kafka clients offering exactly-once semantics, you can now work in programming languages outside of Java without running the risk of processing duplicate messages.
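The transactional flow described above can be sketched in Python roughly as follows. The method names (`init_transactions`, `begin_transaction`, `produce`, `commit_transaction`, `abort_transaction`) mirror the transactional producer API of the `confluent_kafka` Python client. To keep the example runnable without a broker, a hypothetical `RecordingProducer` stands in for the real producer, and the topic name, `transactional.id` value, and helper name are assumptions made for illustration.

```python
def produce_in_transaction(producer, topic, records):
    """Send all records as one transaction: every record commits, or none does."""
    producer.begin_transaction()
    try:
        for key, value in records:
            producer.produce(topic, key=key, value=value)
        producer.commit_transaction()
    except Exception:
        # Roll back so consumers reading committed data never see a partial batch.
        producer.abort_transaction()
        raise


class RecordingProducer:
    """Hypothetical stand-in that records calls instead of talking to Kafka.

    With the real client you would create something like:
        from confluent_kafka import Producer
        producer = Producer({"bootstrap.servers": "localhost:9092",
                             "transactional.id": "payments-writer-1"})
    where both config values are placeholders.
    """

    def __init__(self):
        self.calls = []

    def init_transactions(self):
        self.calls.append("init")

    def begin_transaction(self):
        self.calls.append("begin")

    def produce(self, topic, key=None, value=None):
        self.calls.append("produce")

    def commit_transaction(self):
        self.calls.append("commit")

    def abort_transaction(self):
        self.calls.append("abort")


producer = RecordingProducer()
producer.init_transactions()  # done once per producer, before the first transaction
produce_in_transaction(producer, "payments", [("k1", "v1"), ("k2", "v2")])
print(producer.calls)
```

A production application would likely need finer-grained error handling than this blanket `except`, for example distinguishing retriable errors from fatal ones.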

REST Proxy introduces admin functions in preview to improve Kafka connectivity

In addition to the non-Java clients, Confluent provides REST Proxy so that applications without a supported client can connect to a Kafka cluster over HTTP. This gives you more choices for how to connect to Kafka. Many have adopted this Confluent Platform component because of the simplicity and language-agnostic nature of REST APIs.

Traditionally, REST Proxy was useful for producing and consuming messages, but administrative operations had to be done by shelling out to command line tools, using Confluent Control Center, or using the Java AdminClient class. As part of our effort to provide the same experience across REST Proxy and the Confluent clients, Confluent Platform 5.5 adds several admin functions to the product in preview. These include:

- List and describe brokers

- Create, delete, list, and describe topics

- List and describe configurations for topics

With these new functions, you can produce and consume messages and perform programmatic administrative functions directly from Confluent REST Proxy, providing greater flexibility on how you choose to connect event streaming applications to a Kafka cluster.
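To make the admin functions listed above more concrete, here is a small sketch that maps each operation to an HTTP method and path. The paths assume the cluster-scoped `/v3/clusters/{cluster_id}/...` layout of the REST Proxy v3 API. Because the feature is in preview, treat them as assumptions to check against the REST Proxy API reference. The cluster ID, topic name, and function name are hypothetical.

```python
from urllib.parse import quote


def admin_request(operation, cluster_id, topic=None, broker_id=None):
    """Map an admin operation to an (HTTP method, path) pair.

    Hypothetical helper; it builds paths only and sends nothing.
    """
    base = f"/v3/clusters/{quote(cluster_id, safe='')}"

    if operation == "list_brokers":
        return "GET", f"{base}/brokers"
    if operation == "describe_broker":
        if broker_id is None:
            raise ValueError("describe_broker needs a broker_id")
        return "GET", f"{base}/brokers/{int(broker_id)}"
    if operation == "list_topics":
        return "GET", f"{base}/topics"
    if operation == "create_topic":
        return "POST", f"{base}/topics"

    # The remaining operations all target one named topic.
    if operation in ("describe_topic", "delete_topic", "list_topic_configs"):
        if not topic:
            raise ValueError(f"{operation} needs a topic name")
        topic_path = f"{base}/topics/{quote(topic, safe='')}"
        if operation == "describe_topic":
            return "GET", topic_path
        if operation == "delete_topic":
            return "DELETE", topic_path
        return "GET", f"{topic_path}/configs"

    raise ValueError(f"unknown operation: {operation}")


for op in ("list_brokers", "list_topics"):
    print(admin_request(op, "cluster-1"))
print(admin_request("list_topic_configs", "cluster-1", topic="orders"))
```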

As with any preview feature, admin functions in REST Proxy are not yet supported for production environments, since we could make changes to APIs before reaching general availability with this feature.

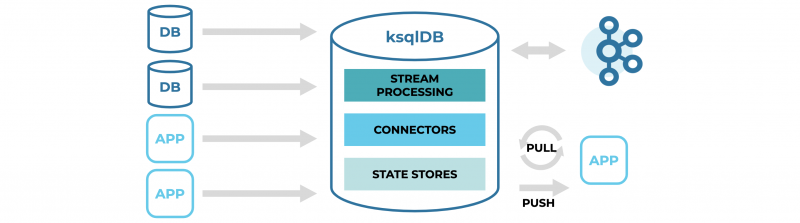

Event streaming database

In November 2019, we launched ksqlDB, an event streaming database that is quickly becoming the tool of choice for creating event streaming applications. To continue providing the functionality and simplicity needed to effectively build these modern applications, we’ve added a number of new features. We’ve also further integrated the product into Confluent Control Center for better visibility into ksqlDB applications.

ksqlDB 0.7 further simplifies the development of event streaming applications

Confluent Platform 5.5 includes ksqlDB 0.7, which makes several key improvements to the event streaming database:

- ksqlDB now includes a COUNT_DISTINCT aggregate function, a highly requested feature that returns a count of the distinct occurrences of a value within a window of a stream.

- In the past, ksqlDB was only able to work with record keys that were of type string. ksqlDB 0.7 removes this limitation, so keys can now be of other primitive types, including INT, BIGINT, and DOUBLE.

- ksqlDB previously introduced functionality for serving queries on tables of state, which we call “pull queries.” ksqlDB has improved the availability of pull queries by rerouting queries to replica table partitions if the leader partition is down. Note: pull queries are still only available in preview state, meaning they are not yet supported in production environments.
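The idea behind a windowed distinct count, as in the first item above, can be sketched in plain Python. This is only a model of the semantics, not ksqlDB's implementation: it counts exactly using sets over tumbling windows, whereas an engine may use a different strategy (for example, estimating cardinality). The event tuples and the function name are invented for the example.

```python
from collections import defaultdict


def count_distinct_per_window(events, window_size_ms):
    """Count distinct values per (group key, tumbling window start).

    `events` is an iterable of (timestamp_ms, group_key, value) tuples.
    """
    seen = defaultdict(set)
    for timestamp_ms, group_key, value in events:
        # Align each event to the start of the tumbling window that contains it.
        window_start = timestamp_ms - (timestamp_ms % window_size_ms)
        seen[(group_key, window_start)].add(value)
    return {bucket: len(values) for bucket, values in seen.items()}


# Hypothetical page-view events: (timestamp in ms, page, user).
events = [
    (1_000, "page_a", "alice"),
    (2_000, "page_a", "bob"),
    (3_000, "page_a", "alice"),   # repeat visitor inside the first window
    (61_000, "page_a", "alice"),  # falls into the next one-minute window
]

result = count_distinct_per_window(events, window_size_ms=60_000)
print(result)  # {('page_a', 0): 2, ('page_a', 60000): 1}
```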

For more details about ksqlDB 0.7, please read the release announcement by Vicky Papavasileiou.

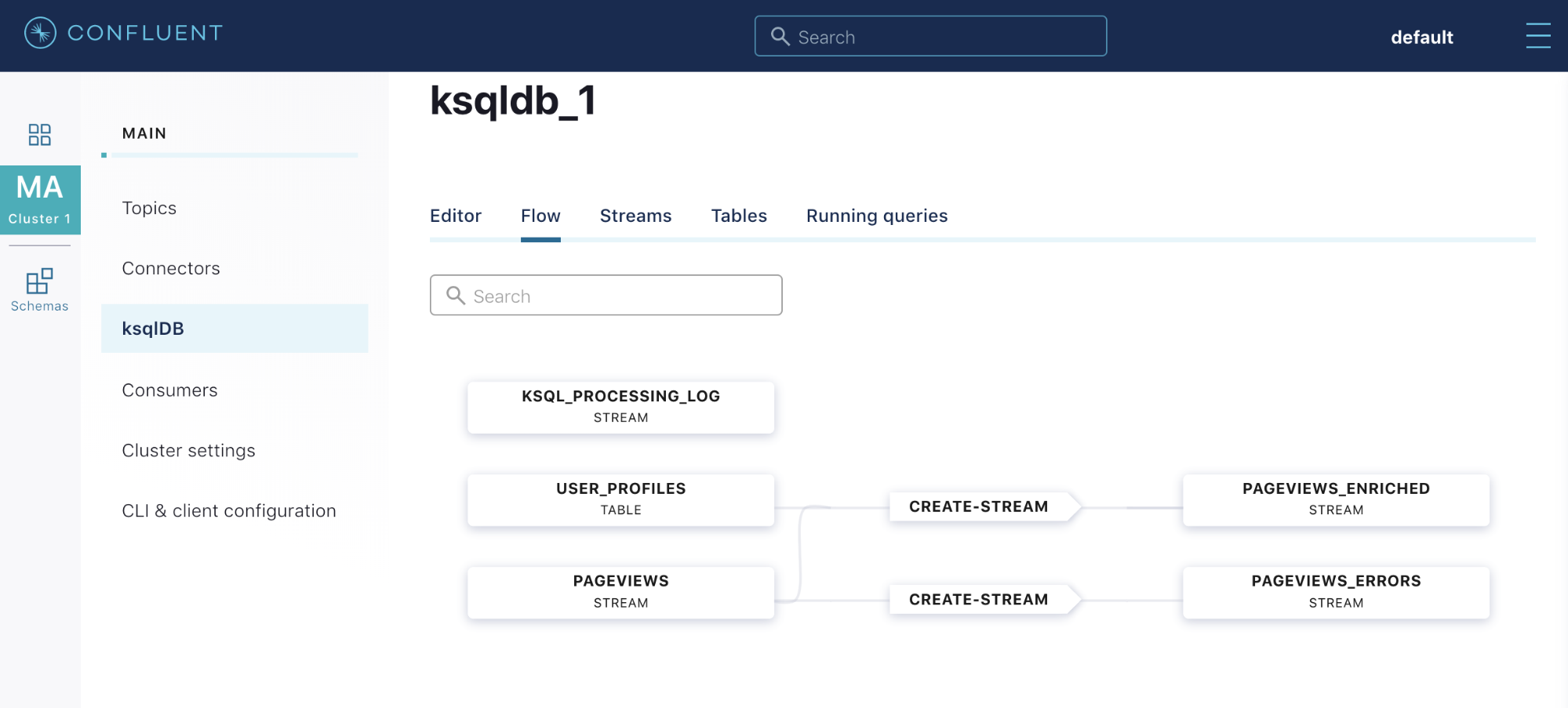

Confluent Control Center provides greater visibility and discovery of ksqlDB applications

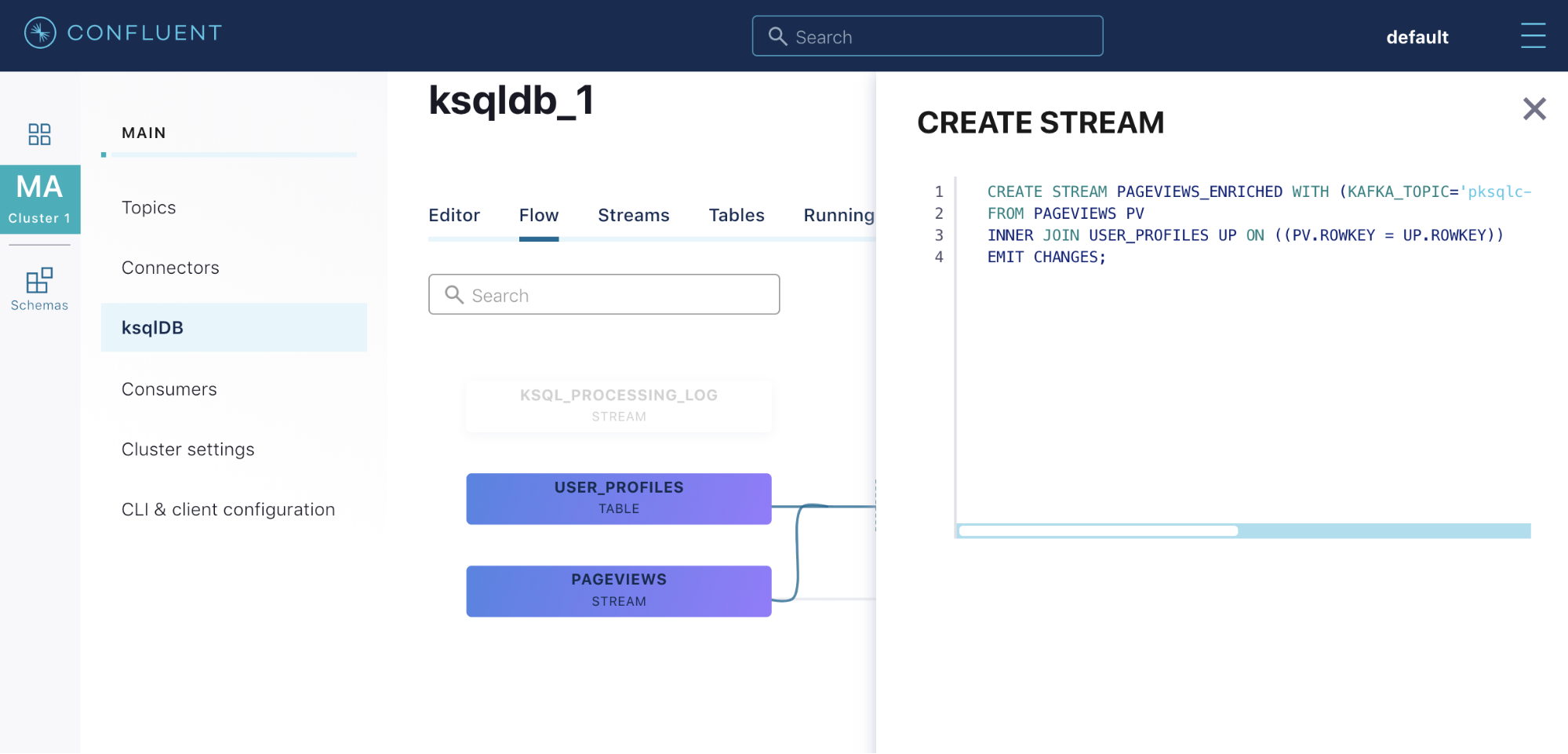

Building event streaming applications is simpler when you can quickly understand ksqlDB applications at a high level rather than digging through a long list of ksqlDB queries one at a time. With Confluent Platform 5.5, Control Center adds the ksqlDB Flow View to accomplish this goal and to continue providing a centralized user interface for all components of Confluent Platform.

After you build a series of queries, the ksqlDB Flow View lets you visualize which tables and streams are being used as inputs, what types of queries are being run on those inputs, and the resulting table and stream outputs.

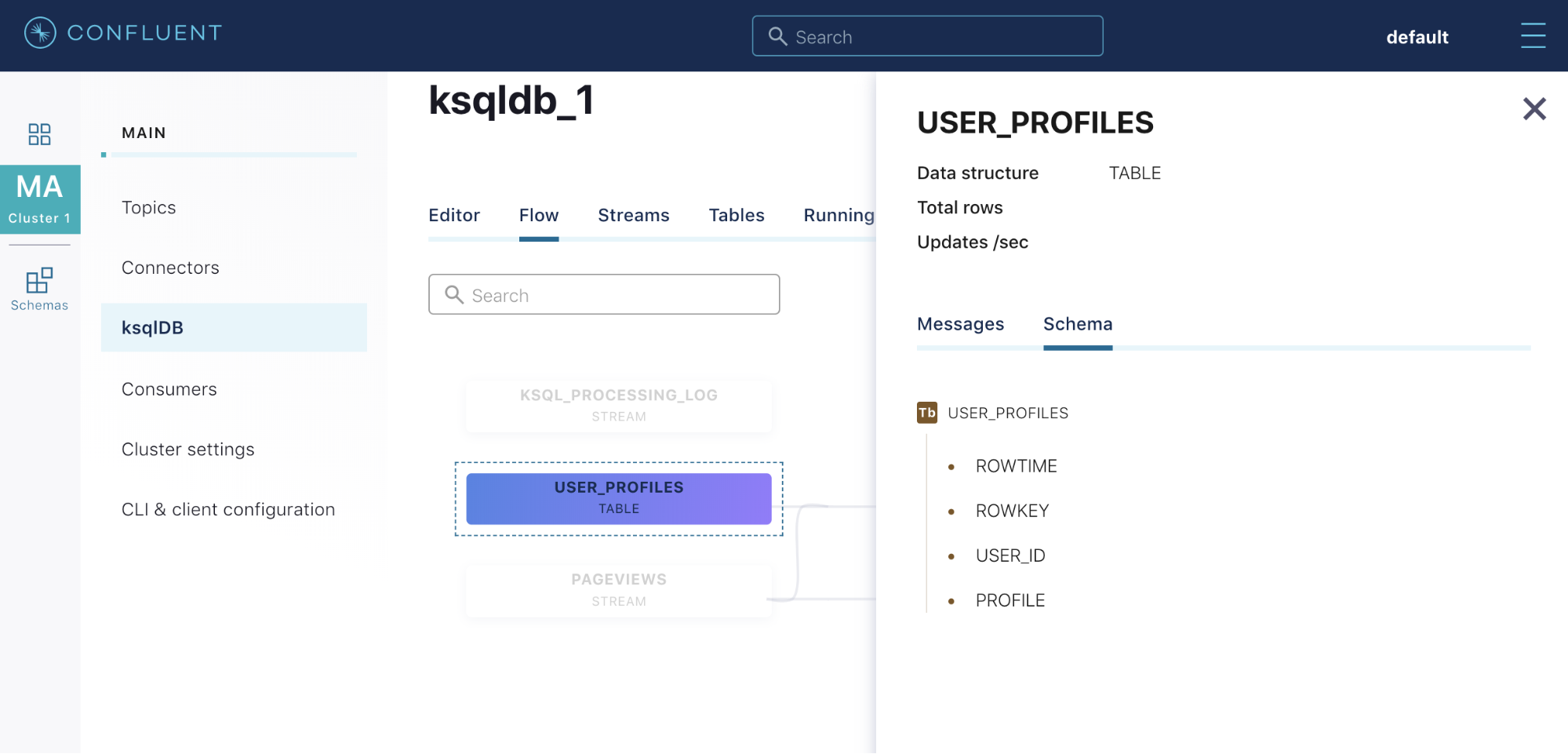

It also allows you to drill down into any of the tables or streams to quickly understand their schema and see what messages are passing through them.

The same functionality is available for nodes representing ksqlDB queries. Drilling down into any of the query nodes provides the associated code so you can quickly understand how the query’s input tables and streams are being manipulated.

Note: while ksqlDB is available under the Confluent Community License, Control Center is a commercially licensed feature of Confluent Platform.

Support for Apache Kafka 2.5

Following the standard for every Confluent release, Confluent Platform 5.5 is built on the most recent version of Kafka—in this case, version 2.5. Kafka 2.5 adds several new features. Here are some of the highlights:

- Progress on KIP-500: Apache ZooKeeper™ removal – ZooKeeper removal was announced in KIP-500, but the delivery will span multiple Kafka releases. For Apache Kafka 2.5, some APIs have been updated to remove ZooKeeper references. KIP-555 details the deprecation process for ZooKeeper in the context of admin tools, and KIP-543 removes the need for ZooKeeper access for dynamic configs.

- KIP-515: Enable ZK client to use the new TLS supported authentication – Before Apache Kafka 2.5, ZooKeeper supported TLS encryption, but there was no secure mechanism for passing the necessary configuration values. KIP-515 enables the use of secure configuration values for using TLS with ZooKeeper, greatly improving the security of communication between brokers and ZooKeeper nodes.

- KIP-558: Track a connector’s active topics – Before Apache Kafka 2.5, it was not easy to know which topics a connector wrote to or read from, particularly when sink connectors used regex for topic selection. Now, Kafka adds functionality that provides an explicit list of a connector’s active topics.
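For the active-topics feature in KIP-558, a sketch of how a client might use it is shown below. It assumes the Kafka Connect REST endpoint `GET /connectors/{name}/topics` and a response shaped like `{"<connector>": {"topics": [...]}}`, which is my reading of the KIP. The Connect URL, connector name, sample response, and helper names are made up, and no request is actually sent.

```python
import json
from urllib.parse import quote


def active_topics_url(connect_url, connector):
    """Build the URL that lists a connector's active topics."""
    return f"{connect_url.rstrip('/')}/connectors/{quote(connector, safe='')}/topics"


def parse_active_topics(response_text, connector):
    """Extract a sorted topic list from the (assumed) response body."""
    payload = json.loads(response_text)
    return sorted(payload[connector]["topics"])


# Hypothetical response for a sink connector subscribed via a topic regex.
sample_response = '{"orders-sink": {"topics": ["orders-us", "orders-eu"]}}'

print(active_topics_url("http://localhost:8083", "orders-sink"))
print(parse_active_topics(sample_response, "orders-sink"))
```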

For more details about Apache Kafka 2.5, please read the blog post by David Arthur.

Interested in more?

Check out Tim Berglund’s video summary below for an overview of what’s new in Confluent Platform 5.5 and download Confluent Platform 5.5 to get started with a complete event streaming platform built by the original creators of Apache Kafka.
