Technology

Data Products, Data Contracts, and Change Data Capture

Change data capture is a popular method for connecting database tables to data streams, but it comes with drawbacks. The next evolution of the CDC pattern, first-class data products, provides resilient pipelines that support both real-time and batch processing while isolating upstream systems...

Unlock Cost Savings with Freight Clusters–Now in General Availability

Confluent Cloud Freight clusters are now Generally Available on AWS. In this blog, learn how Freight clusters can save you up to 90% at GBps+ scale.

Contributing to Apache Kafka®: How to Write a KIP

Learn how to contribute to open source Apache Kafka by writing Kafka Improvement Proposals (KIPs) that solve problems and add features! Read on for real examples.

Protect Your Data With Self-Managed Keys (BYOK) Enhancements

Discover how Confluent’s BYOK for Enterprise Clusters and support for external key managers (EKMs) enhance security and enable efficient data streaming in our latest blog.

Flink AI: Hands-On FEDERATED_SEARCH()—Search a Vector Database with Confluent Cloud for Apache Flink®

An introduction to Flink SQL FEDERATED_SEARCH() on Confluent Cloud. Together with ML_PREDICT(), FEDERATED_SEARCH() enables developers to implement GenAI use cases with data streaming technologies.

Cluster Linking for Azure Private Link is Now Available in Confluent Cloud

Confluent Cloud now supports Cluster Linking for Azure Private Link, enabling secure data replication between Kafka clusters in private Azure environments. With Cluster Linking, organizations can achieve real-time data movement, disaster recovery, migrations, and secure data sharing.

Your AI Project Has a Data Liberation Problem

Most AI projects fail not because of bad models, but because of bad data. Siloed, stale, and locked in batch pipelines, enterprise data isn’t AI-ready. This post breaks down the data liberation problem and how streaming solves it—freeing real-time data so AI can actually deliver value.

Managing Data Contracts: Helping Developers Codify “Shift Left”

The concept of “shift left” in building data pipelines involves applying stream governance close to the source of events. Let’s discuss some tools (like Terraform and Gradle) and practices used by data streaming engineers to build and maintain those data contracts.

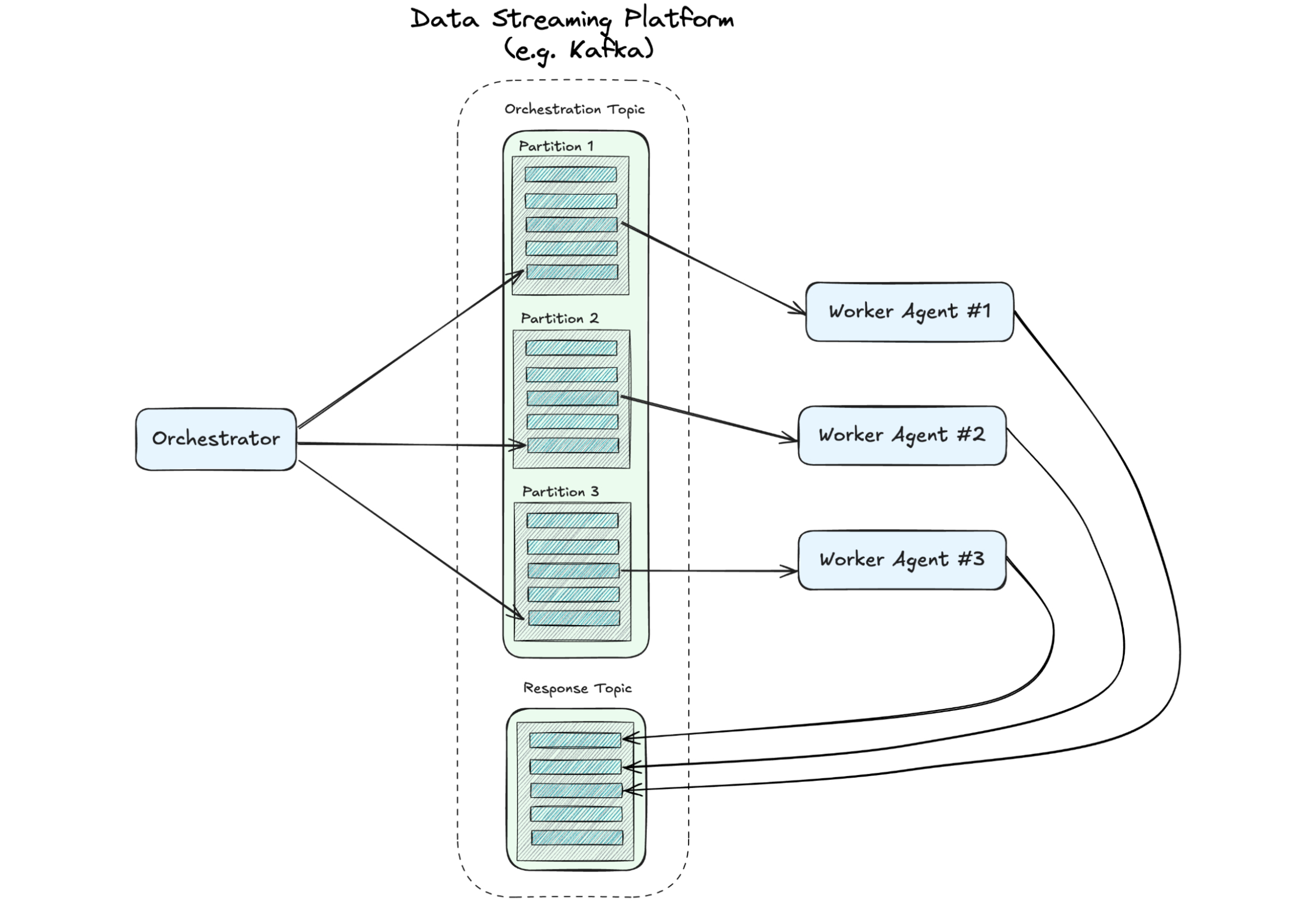

A Distributed State of Mind: Event-Driven Multi-Agent Systems

This article explores how event-driven design, a proven approach in microservices, can bring order to the chaos of coordinating multiple agents and produce scalable, efficient multi-agent systems. If you’re leading teams toward the future of AI, understanding these patterns is critical. We’ll demonstrate how they can be implemented.

Using Apache Flink® for Model Inference: A Guide for Real-Time AI Applications

Learn how Flink lets developers connect real-time data to external models through remote inference, enabling seamless coordination between data processing and AI/ML workflows.

Building High Throughput Apache Kafka Applications with Confluent and Provisioned Mode for AWS Lambda Event Source Mapping (ESM)

Learn how to use the recently launched Provisioned Mode for Lambda’s Kafka ESM to build high-throughput Kafka applications with Confluent Cloud’s Kafka platform. This blog also walks through a sample scenario for activating and testing Provisioned Mode for ESM and outlines best practices.

Automating Podcast Promotion with AI and Event-Driven Design

We built an AI-powered tool to automate LinkedIn post creation for podcasts, using Kafka, Flink, and OpenAI models. With an event-driven design, it’s scalable, modular, and future-proof. Learn how this system works and explore the code on GitHub in our latest blog.

Revolutionizing Failure Management in Apache Flink: Meet FLIP-304's Pluggable Failure Enrichers

FLIP-304 lets you customize and enrich Flink failure messages: assign types to failures, emit custom metrics per type, and expose your failure data to other tools.

Agentic AI: The Top 5 Challenges and How to Overcome Them

Before deploying agentic AI, enterprises should be prepared to address several issues that could impact the trustworthiness and security of the system.

Optimize SaaS Integration with Fully Managed HTTP Connectors V2 for Confluent Cloud

Learn how an e-commerce company integrates the data from its Stripe system with the Pinecone vector database using the new fully managed HTTP Source V2 and HTTP Sink V2 Connectors along with Flink AI model inference in Confluent Cloud to enhance its real-time fraud detection.

New Security Tools to Protect Your New Year’s Resolutions

Confluent's advanced security and connectivity features allow you to protect your data and innovate confidently. Features like mutual TLS (mTLS), Private Link for Schema Registry, and Private Link for Flink not only bolster security but also streamline network architecture and improve performance.