
Apache Kafka Security 101

TLS, Kerberos, SASL, and Authorizer in Apache Kafka 0.9 – Enabling New Encryption, Authorization, and Authentication Features

Apache Kafka is frequently used to store critical data, making it one of the most important components of a company’s data infrastructure. Our goal is to make it possible to run Kafka as a central platform for streaming data, supporting anything from a single app to a whole company. Multi-tenancy is an essential requirement in achieving this vision and, in turn, security features are crucial for multi-tenancy.

Prior to 0.9, Kafka had no built-in security features. One could lock down access at the network level, but this is not viable for a big shared multi-tenant cluster used across a large company. Consequently, securing Kafka has been one of the most requested features. Security is of particular importance in today’s world, where cyber-attacks are a common occurrence and the threat of data breaches is a reality for businesses of all sizes, and at all levels from individual users to whole government entities.

Four key security features were added in Apache Kafka 0.9, which is included in the Confluent Platform 2.0:

- Administrators can require client authentication using either Kerberos or Transport Layer Security (TLS) client certificates, so that Kafka brokers know who is making each request.

- A Unix-like permissions system can be used to control which users can access which data.

- Network communication can be encrypted, allowing messages to be securely sent across untrusted networks.

- Administrators can require authentication for communication between Kafka brokers and ZooKeeper.

In this post, we will discuss how to secure Kafka using these features. For simplicity, we will assume a brand new cluster; the Confluent documentation describes how to enable security features on a running Kafka cluster. With regard to clients, we will focus on the console and Java clients (a future blog post will cover librdkafka, the C client we maintain).

It’s worth noting that the security features were implemented in a backwards-compatible manner and are disabled by default. In addition, only the new Java clients (and librdkafka) have been augmented with support for security. For the most part, enabling security is simply a matter of configuration and no code changes are required.

Defining the Solution

There are a number of different ways to secure a Kafka cluster depending on one’s requirements. In this post, we will show one possible approach, but Confluent’s Kafka Security documentation describes the various options in more detail.

For client/broker and inter-broker communication, we will:

- Require TLS or Kerberos authentication

- Encrypt network traffic via TLS

- Perform authorization via access control lists (ACLs)

For broker/ZooKeeper communication, we will only require Kerberos authentication as TLS is only supported in ZooKeeper 3.5, which is still at the alpha release stage.

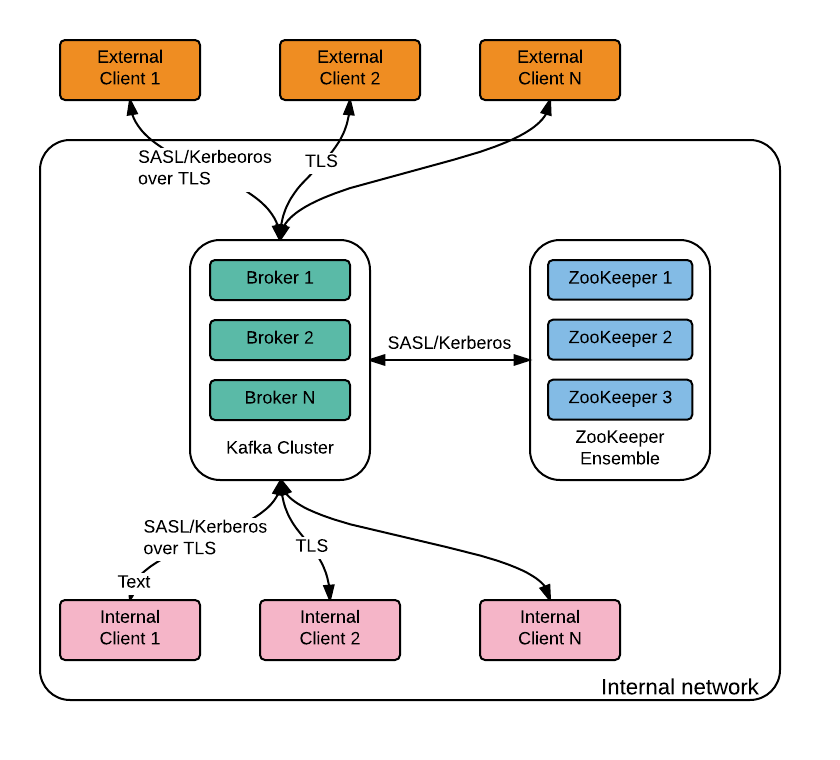

Network segmentation should be used to restrict access to ZooKeeper. Depending on performance and security requirements, Kafka brokers could be accessible internally, exposed to the public internet or via a proxy (in some environments, public internet traffic must go through two separate security stacks in order to make it harder for attackers to exploit bugs in a particular security stack). A simple example can be seen in the following diagram: Before we start

Before we start

First a note on terminology. Secure Sockets Layer (SSL) is the predecessor of TLS and it has been deprecated since June 2015. However, for historical reasons, Kafka (like Java) uses the term SSL instead of TLS in configuration and code, which can be a bit confusing. We will stick to TLS in this document.

Before we start, we need to generate the TLS keys and certificates, create the Kerberos principals and potentially configure the Java Development Kit (JDK) so that it supports stronger encryption algorithms.

TLS Keys and Certificates

We need to generate a key and certificate for each broker and client in the cluster. The common name (CN) of the broker certificate must match the fully qualified domain name (FQDN) of the server as the client compares the CN with the DNS domain name to ensure that it is connecting to the desired broker (instead of a malicious one).

At this point, each broker has a public-private key pair and an unsigned certificate to identify itself. To prevent forged certificates, it is important for each certificate to be signed by a certificate authority (CA). As long as the CA is a genuine and trusted authority, the clients have high assurance that they are connecting to authentic brokers.

In contrast to the keystore, which stores each application’s identity, the truststore stores all the certificates that the application should trust. Importing a certificate into one’s truststore also means trusting all certificates that are signed by that certificate. This attribute is called the chain of trust, and it is particularly useful when deploying TLS on a large Kafka cluster. You can sign all certificates in the cluster with a single CA, and have all machines share the same truststore that contains the CA certificate. That way all machines can authenticate all other machines. A slightly more complex alternative is to use two CAs, one to sign brokers’ keys and another to sign clients’ keys.

For this exercise, we will generate our own CA, which is simply a public-private key pair and certificate and we will add the same CA certificate to each client and broker’s truststore.

The following bash script generates the keystore and truststore for brokers (kafka.server.keystore.jks and kafka.server.truststore.jks) and clients (kafka.client.keystore.jks and kafka.client.truststore.jks):

#!/bin/bash

# Passphrase of the CA key; enter the same value when openssl prompts for it below
PASSWORD=test1234

# Certificate validity in days
VALIDITY=365

# Broker: generate a key pair and an unsigned certificate (keytool prompts for passwords and the CN)
keytool -keystore kafka.server.keystore.jks -alias localhost -validity $VALIDITY -genkey

# Create our own CA: a key pair plus a self-signed certificate
openssl req -new -x509 -keyout ca-key -out ca-cert -days $VALIDITY

# Add the CA certificate to the broker and client truststores
keytool -keystore kafka.server.truststore.jks -alias CARoot -import -file ca-cert

keytool -keystore kafka.client.truststore.jks -alias CARoot -import -file ca-cert

# Broker: export a signing request and sign it with the CA
keytool -keystore kafka.server.keystore.jks -alias localhost -certreq -file cert-file

openssl x509 -req -CA ca-cert -CAkey ca-key -in cert-file -out cert-signed -days $VALIDITY -CAcreateserial -passin pass:$PASSWORD

# Broker: import the CA certificate first, then the signed certificate
keytool -keystore kafka.server.keystore.jks -alias CARoot -import -file ca-cert

keytool -keystore kafka.server.keystore.jks -alias localhost -import -file cert-signed

# Client: repeat the same steps (cert-file and cert-signed are overwritten)
keytool -keystore kafka.client.keystore.jks -alias localhost -validity $VALIDITY -genkey

keytool -keystore kafka.client.keystore.jks -alias localhost -certreq -file cert-file

openssl x509 -req -CA ca-cert -CAkey ca-key -in cert-file -out cert-signed -days $VALIDITY -CAcreateserial -passin pass:$PASSWORD

keytool -keystore kafka.client.keystore.jks -alias CARoot -import -file ca-cert

keytool -keystore kafka.client.keystore.jks -alias localhost -import -file cert-signed
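One way to sanity-check the stores produced by the script is to list their entries from Java. The following is a minimal sketch that uses only the JDK's KeyStore API; the class name StoreInspector and its methods are hypothetical helpers introduced here for illustration, not part of Kafka.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.security.GeneralSecurityException;
import java.security.KeyStore;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/** Hypothetical helper: lists the entries of a JKS keystore or truststore. */
final class StoreInspector {

    /** Loads a JKS store from disk using the given store password. */
    static KeyStore load(Path path, char[] password)
            throws IOException, GeneralSecurityException {
        KeyStore store = KeyStore.getInstance("JKS");
        try (InputStream in = Files.newInputStream(path)) {
            store.load(in, password);
        }
        return store;
    }

    /** Describes each alias as a private-key entry or a trusted-certificate entry. */
    static List<String> describe(KeyStore store) throws GeneralSecurityException {
        List<String> lines = new ArrayList<>();
        for (String alias : Collections.list(store.aliases())) {
            String kind = store.isKeyEntry(alias) ? "private key" : "trusted certificate";
            lines.add(alias + " -> " + kind);
        }
        return lines;
    }

    public static void main(String[] args) throws Exception {
        // Usage: java StoreInspector <store.jks> <store-password>
        KeyStore store = load(Paths.get(args[0]), args[1].toCharArray());
        describe(store).forEach(System.out::println);
    }
}
```

Run against a keystore, this should show one private-key entry plus the CA certificate; run against a truststore, it should show only the CA certificate.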

Kerberos

If your organization is already using a Kerberos server, it can also be used for Kafka. Otherwise you will need to install one. Your Linux vendor likely has packages for Kerberos and a guide on how to install and configure it (e.g. Ubuntu, Red Hat).

If you are using the organization’s Kerberos server, ask your Kerberos administrator for a principal for each Kafka broker in your cluster and for every operating system user that will access Kafka with Kerberos authentication (via clients or tools).

If you have installed your own Kerberos, you will need to create these principals yourself using the following commands:

sudo /usr/sbin/kadmin.local -q 'addprinc -randkey kafka/{hostname}@{REALM}'

sudo /usr/sbin/kadmin.local -q "ktadd -k /etc/security/keytabs/{keytabname}.keytab kafka/{hostname}@{REALM}"

It is a Kerberos requirement that all your hosts can be resolved by their FQDNs.

Stronger Encryption

Due to import regulations in some countries, the Oracle implementation of Java limits the strength of cryptographic algorithms available by default. If stronger algorithms are needed (for example, AES with 256-bit keys), the JCE Unlimited Strength Jurisdiction Policy Files must be obtained and installed in the JDK/JRE. This affects both TLS and SASL/Kerberos; see the JCA Providers Documentation for more information.

Configuring the ZooKeeper ensemble

The ZooKeeper server configuration is relatively straightforward. We enable Kerberos authentication via the Simple Authentication and Security Layer (SASL). To do that, we set the authentication provider, require SASL authentication and configure the login renewal period in zookeeper.properties:

authProvider.1=org.apache.zookeeper.server.auth.SASLAuthenticationProvider

requireClientAuthScheme=sasl

jaasLoginRenew=3600000

We also need to configure Kerberos via the zookeeper_jaas.conf file as follows:

Server {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

keyTab="/path/to/server/keytab"

storeKey=true

useTicketCache=false

principal="zookeeper/yourzkhostname";

};

Finally, we need to pass the path to the JAAS file when starting the ZooKeeper server:

-Djava.security.auth.login.config=/path/to/server/jaas/file.conf

Configuring Kafka brokers

We start by configuring the desired security protocols and ports in server.properties:

listeners=SSL://:9093,SASL_SSL://:9094

We have not enabled an unsecured (PLAINTEXT) port as we want to ensure that all broker/client and inter-broker network communication is encrypted. We choose SSL as the security protocol for inter-broker communication (SASL_SSL is the other possible option given the configured listeners):

security.inter.broker.protocol=SSL

We know that it is difficult to simultaneously upgrade all systems to the new secure clients, so we allow administrators to support a mix of secure and unsecured clients. This can be done by adding a PLAINTEXT port to listeners, but care has to be taken to restrict access to this port to trusted clients only. Network segmentation and/or authorization ACLs can be used to restrict access to trusted IPs in such cases. We will not cover this in more detail as we will not enable a PLAINTEXT port in our example.

We’ll now go over protocol-specific configuration settings.

TLS

We require TLS client authentication and configure key, keystore and truststore details:

ssl.client.auth=required

ssl.keystore.location=/var/private/ssl/kafka.server.keystore.jks

ssl.keystore.password=test1234

ssl.key.password=test1234

ssl.truststore.location=/var/private/ssl/kafka.server.truststore.jks

ssl.truststore.password=test1234

Since we are storing passwords in the broker config, it is important to restrict access via filesystem permissions.

SASL/Kerberos

We will enable SASL/Kerberos for broker/client and broker/ZooKeeper communication.

Most of the configuration for SASL lives in JAAS configuration files containing a KafkaServer section for authentication between client and broker and a Client section for authentication between broker and ZooKeeper:

KafkaServer {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

storeKey=true

keyTab="/etc/security/keytabs/kafka_server.keytab"

principal="kafka/kafka1.hostname.com@EXAMPLE.COM";

};

// Zookeeper client authentication

Client {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

storeKey=true

keyTab="/etc/security/keytabs/kafka_server.keytab"

principal="kafka/kafka1.hostname.com@EXAMPLE.COM";

};

Note that each broker should have its own keytab and the same primary name should be used across all brokers. In the above example, the principal is kafka/kafka1.hostname.com@EXAMPLE.COM and the primary name is kafka. The keytabs configured in the JAAS file must be readable by the operating system user who is starting the Kafka broker.
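To make the relationship between a principal and its primary name concrete, here is a small sketch that splits a principal of the form primary/instance@REALM into its parts. The class KerberosPrincipalParts is a hypothetical illustration using plain string handling; it is not how Kafka or the JDK parse principals internally.

```java
/** Illustrative value class (hypothetical name); splits primary/instance@REALM. */
final class KerberosPrincipalParts {
    final String primary;   // e.g. "kafka"
    final String instance;  // e.g. "kafka1.hostname.com", or null if absent
    final String realm;     // e.g. "EXAMPLE.COM", or null if absent

    private KerberosPrincipalParts(String primary, String instance, String realm) {
        this.primary = primary;
        this.instance = instance;
        this.realm = realm;
    }

    static KerberosPrincipalParts parse(String principal) {
        String name = principal;
        String realm = null;
        // Everything after the last '@' is the realm.
        int at = principal.lastIndexOf('@');
        if (at >= 0) {
            realm = principal.substring(at + 1);
            name = principal.substring(0, at);
        }
        String primary = name;
        String instance = null;
        // The part before the first '/' is the primary; the rest is the instance.
        int slash = name.indexOf('/');
        if (slash >= 0) {
            primary = name.substring(0, slash);
            instance = name.substring(slash + 1);
        }
        return new KerberosPrincipalParts(primary, instance, realm);
    }

    public static void main(String[] args) {
        KerberosPrincipalParts p = parse("kafka/kafka1.hostname.com@EXAMPLE.COM");
        System.out.println("primary=" + p.primary
                + " instance=" + p.instance + " realm=" + p.realm);
    }
}
```

For the broker principal above, the primary is kafka, which is the value that the service name setting described next has to match.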

We must also configure the service name in server.properties with the primary name of the Kafka brokers:

sasl.kerberos.service.name=kafka

When it comes to ZooKeeper authentication, if we set the configuration property zookeeper.set.acl in each broker to true, the metadata stored in ZooKeeper is such that only brokers will be able to modify the corresponding znodes, but znodes are world readable. The rationale behind this decision is that the data stored in ZooKeeper is not sensitive, but inappropriate manipulation of znodes can cause cluster disruption.

Authorization and ACLs

Kafka ships with a pluggable Authorizer and an out-of-box authorizer implementation that uses ZooKeeper to store all the ACLs.

Kafka ACLs are defined in the general format of “Principal P is [Allowed/Denied] Operation O From Host H On Resource R”. The operations available are both for clients (producers, consumers, admin) and inter-broker operations of a cluster. In a secure cluster, both client requests and inter-broker operations require authorization.

We enable the default authorizer by setting the following in server.properties:

authorizer.class.name=kafka.security.auth.SimpleAclAuthorizer

The default behavior is such that if a resource has no associated ACLs, then no one is allowed to access the resource, except super users. Setting broker principals as super users is a convenient way to give them the required access to perform inter-broker operations:

super.users=User:Bob;User:Alice
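The evaluation rules described above can be sketched as a small in-memory model. This is a simplified illustration, not Kafka's authorizer code: the class AclModel, its methods and the principal names are hypothetical, and details such as wildcard principals are left out. It does reflect three behaviors of the bundled authorizer: super users bypass ACL checks, a matching deny takes precedence over a matching allow, and a request with no matching ACL is rejected.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Simplified, hypothetical model of "Principal P is Allowed/Denied Operation O From Host H On Resource R". */
final class AclModel {
    enum Permission { ALLOW, DENY }

    static final class Acl {
        final String principal;
        final Permission permission;
        final String operation;
        final String host;      // "*" matches any host
        final String resource;

        Acl(String principal, Permission permission, String operation,
            String host, String resource) {
            this.principal = principal;
            this.permission = permission;
            this.operation = operation;
            this.host = host;
            this.resource = resource;
        }

        boolean matches(String p, String op, String h, String r) {
            return principal.equals(p) && operation.equals(op)
                    && (host.equals("*") || host.equals(h)) && resource.equals(r);
        }
    }

    private final Set<String> superUsers = new HashSet<>();
    private final List<Acl> acls = new ArrayList<>();

    void addSuperUser(String principal) { superUsers.add(principal); }

    void add(Acl acl) { acls.add(acl); }

    boolean authorize(String principal, String operation, String host, String resource) {
        // Super users are always allowed.
        if (superUsers.contains(principal)) {
            return true;
        }
        boolean allowed = false;
        for (Acl acl : acls) {
            if (!acl.matches(principal, operation, host, resource)) {
                continue;
            }
            // A matching deny wins over any allow.
            if (acl.permission == Permission.DENY) {
                return false;
            }
            allowed = true;
        }
        // No matching ACL means the request is rejected.
        return allowed;
    }

    public static void main(String[] args) {
        AclModel model = new AclModel();
        model.addSuperUser("User:broker1");
        model.add(new Acl("User:Alice", Permission.ALLOW, "Read", "*", "Topic:Test-topic"));
        System.out.println(model.authorize("User:Alice", "Read", "10.0.0.1", "Topic:Test-topic"));
        System.out.println(model.authorize("User:Alice", "Write", "10.0.0.1", "Topic:Test-topic"));
    }
}
```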

By default, the TLS user name will be of the form “CN=host1.example.com,OU=,O=Confluent,L=London,ST=London,C=GB”. One can change that by setting a customized PrincipalBuilder in server.properties like the following:

principal.builder.class=CustomizedPrincipalBuilderClass
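As an illustration of the kind of mapping a customized principal builder might apply, the sketch below extracts the common name from a certificate's distinguished name using the JDK's LdapName parser. It deliberately does not implement Kafka's PrincipalBuilder interface; the class DnShortName is a hypothetical helper, and the sample DN in main leaves out the empty OU component. If the DN cannot be parsed or has no CN, the sketch falls back to the full DN.

```java
import javax.naming.InvalidNameException;
import javax.naming.ldap.LdapName;
import javax.naming.ldap.Rdn;

/** Hypothetical helper: maps a certificate DN to a shorter user name. */
final class DnShortName {

    /** Returns the CN of the DN, or the DN unchanged if it has no CN or cannot be parsed. */
    static String commonName(String distinguishedName) {
        try {
            LdapName name = new LdapName(distinguishedName);
            for (Rdn rdn : name.getRdns()) {
                if ("CN".equalsIgnoreCase(rdn.getType())) {
                    return rdn.getValue().toString();
                }
            }
        } catch (InvalidNameException e) {
            // Fall through and keep the full DN as the user name.
        }
        return distinguishedName;
    }

    public static void main(String[] args) {
        String dn = "CN=host1.example.com,O=Confluent,L=London,ST=London,C=GB";
        System.out.println(commonName(dn));
    }
}
```

Whatever name the builder produces is the name that ACLs and super.users entries have to use, so a change here needs matching changes there.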

By default, the SASL user name will be the primary part of the Kerberos principal. One can change that by setting sasl.kerberos.principal.to.local.rules to a customized rule in server.properties.

We can use kafka-acls (the Kafka Authorizer CLI) to add, remove or list ACLs. Please run kafka-acls --help for detailed information on the supported options.

The most common use cases for ACL management are adding/removing a principal as a producer or consumer and there are convenience options to handle these cases. In order to add User:Bob as a producer of Test-topic we can execute the following:

kafka-acls --authorizer-properties zookeeper.connect=localhost:2181 \

--add --allow-principal User:Bob \

--producer --topic Test-topic

Similarly, to add User:Alice as a consumer of Test-topic with consumer group Group-1, we specify the --consumer and --group options:

kafka-acls --authorizer-properties zookeeper.connect=localhost:2181 \

--add --allow-principal User:Alice \

--consumer --topic Test-topic --group Group-1

Configuring Kafka Clients

TLS is supported only by the new Kafka producer and consumer; the older APIs are not supported. Enabling security is simply a matter of configuration, and no code changes are required.

TLS

The configs for TLS will be the same for both producer and consumer. We have to set the desired security protocol as well as the truststore and keystore information since we are using mutual authentication:

security.protocol=SSL

ssl.truststore.location=/var/private/ssl/kafka.client.truststore.jks

ssl.truststore.password=test1234

ssl.keystore.location=/var/private/ssl/kafka.client.keystore.jks

ssl.keystore.password=test1234

ssl.key.password=test1234

Given that passwords are being stored in the client config, it is important to restrict access to the file via filesystem permissions.

SASL/Kerberos

Clients (producers, consumers, connect workers, etc) will authenticate to the cluster with their own principal (usually with the same name as the user running the client), so we need to obtain or create these principals as needed. Then we create a JAAS file for each principal. The KafkaClient section describes how the clients like producer and consumer can connect to the Kafka Broker. The following is an example configuration for a client using a keytab (recommended for long-running processes):

KafkaClient {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

storeKey=true

keyTab="/etc/security/keytabs/kafka_client.keytab"

principal="kafka-client-1@EXAMPLE.COM";

};

For command-line utilities like kafka-console-consumer or kafka-console-producer, kinit can be used along with useTicketCache=true as in:

KafkaClient {

com.sun.security.auth.module.Krb5LoginModule required

useTicketCache=true;

};

The security protocol and service name are set in producer.properties and/or consumer.properties. We also have to include the truststore details since we are using SASL_SSL instead of SASL_PLAINTEXT:

security.protocol=SASL_SSL

sasl.kerberos.service.name=kafka

ssl.truststore.location=/var/private/ssl/kafka.client.truststore.jks

ssl.truststore.password=test1234

# keystore configuration should not be needed, see note below

ssl.keystore.location=/var/private/ssl/kafka.client.keystore.jks

ssl.keystore.password=test1234

ssl.key.password=test1234

The keystore configuration is needed in 0.9.0.0 due to a bug in Kafka, which has been fixed and will be included in 0.9.0.1.

Finally, we pass the name of the JAAS file as a JVM parameter to the client JVM:

-Djava.security.auth.login.config=/etc/kafka/kafka_client_jaas.conf

The keytabs configured in kafka_client_jaas.conf must be readable by the operating system user who is starting the Kafka client.
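A missing system property or an unreadable file is a common cause of SASL login failures, so a quick preflight check can save some debugging time. The following is a minimal sketch using only the JDK; JaasPreflight is a hypothetical helper, and it only checks that the JAAS file itself is set and readable (the keytab paths inside it would need the same check).

```java
import java.nio.file.Files;
import java.nio.file.Paths;

/** Hypothetical preflight check for the JAAS configuration system property. */
final class JaasPreflight {
    static final String JAAS_PROPERTY = "java.security.auth.login.config";

    /** Returns a description of the problem, or null if the path looks usable. */
    static String check(String path) {
        if (path == null || path.isEmpty()) {
            return "-D" + JAAS_PROPERTY + " is not set";
        }
        if (!Files.isReadable(Paths.get(path))) {
            return path + " is not readable by the current user";
        }
        return null;
    }

    public static void main(String[] args) {
        String problem = check(System.getProperty(JAAS_PROPERTY));
        System.out.println(problem == null ? "JAAS file looks usable" : problem);
    }
}
```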

Putting It All Together

We have created a Vagrant setup based on CentOS 7.2 that includes a Kerberos server, Kafka and OpenJDK 1.8.0 to make it easier to test all the pieces together. Please install Vagrant and VirtualBox (if you haven’t already) and then:

- Clone the git repository: git clone https://github.com/confluentinc/securing-kafka-blog

- Change directory: cd securing-kafka-blog

- Start and provision the Vagrant environment: vagrant up

- Connect to the VM via SSH: vagrant ssh

ZooKeeper and Broker

Before we start ZooKeeper and the Kafka broker, let’s take a look at their config files:

/etc/kafka/zookeeper.properties

dataDir=/var/lib/zookeeper

clientPort=2181

authProvider.1=org.apache.zookeeper.server.auth.SASLAuthenticationProvider

requireClientAuthScheme=sasl

jaasLoginRenew=3600000

/etc/kafka/zookeeper_jaas.conf

Server {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

keyTab="/etc/security/keytabs/zookeeper.keytab"

storeKey=true

useTicketCache=false

principal="zookeeper/kafka.example.com@EXAMPLE.COM";

};

/etc/kafka/server.properties

broker.id=0

listeners=SSL://:9093,SASL_SSL://:9095

security.inter.broker.protocol=SSL

zookeeper.connect=kafka.example.com:2181

log.dirs=/var/lib/kafka

zookeeper.set.acl=true

ssl.client.auth=required

ssl.keystore.location=/etc/security/tls/kafka.server.keystore.jks

ssl.keystore.password=test1234

ssl.key.password=test1234

ssl.truststore.location=/etc/security/tls/kafka.server.truststore.jks

ssl.truststore.password=test1234

sasl.kerberos.service.name=kafka

authorizer.class.name=kafka.security.auth.SimpleAclAuthorizer

super.users=User:CN=kafka.example.com,OU=,O=Confluent,L=London,ST=London,C=GB

/etc/kafka/kafka_server_jaas.conf

KafkaServer {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

storeKey=true

keyTab="/etc/security/keytabs/kafka.keytab"

principal="kafka/kafka.example.com@EXAMPLE.COM";

};

Client {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

storeKey=true

keyTab="/etc/security/keytabs/kafka.keytab"

principal="kafka/kafka.example.com@EXAMPLE.COM";

};

And the start script (/usr/sbin/start-zk-and-kafka) looks like:

export KAFKA_HEAP_OPTS='-Xmx256M'

export KAFKA_OPTS='-Djava.security.auth.login.config=/etc/kafka/zookeeper_jaas.conf'

/usr/bin/zookeeper-server-start /etc/kafka/zookeeper.properties &

sleep 5

export KAFKA_OPTS='-Djava.security.auth.login.config=/etc/kafka/kafka_server_jaas.conf'

/usr/bin/kafka-server-start /etc/kafka/server.properties &

After executing the script (sudo /usr/sbin/start-zk-and-kafka), the following should be in the server.log:

INFO Registered broker 0 at path /brokers/ids/0 with addresses: SSL -> EndPoint(kafka.example.com,9093,SSL),SASL_SSL -> EndPoint(kafka.example.com,9095,SASL_SSL)

Create topic

Because we configured ZooKeeper to require SASL authentication, we need to set the java.security.auth.login.config system property while starting the kafka-topics tool:

export KAFKA_OPTS="-Djava.security.auth.login.config=/etc/kafka/kafka_server_jaas.conf"

kafka-topics --create --topic securing-kafka --replication-factor 1 --partitions 3 --zookeeper kafka.example.com:2181

We used the server principal and keytab for this example, but you may want to create a separate principal and keytab for tools such as this.

Set ACLs

We authorize the clients to access the newly created topic:

export KAFKA_OPTS="-Djava.security.auth.login.config=/etc/kafka/kafka_server_jaas.conf"

kafka-acls --authorizer-properties zookeeper.connect=kafka.example.com:2181 \

--add --allow-principal User:kafkaclient \

--producer --topic securing-kafka

kafka-acls --authorizer-properties zookeeper.connect=kafka.example.com:2181 \

--add --allow-principal User:kafkaclient \

--consumer --topic securing-kafka --group securing-kafka-group

Console Clients

The client configuration is slightly different depending on whether we want the client to use TLS or SASL/Kerberos.

TLS

The console producer is a convenient way to send a small amount of data to the broker:

kafka-console-producer --broker-list kafka.example.com:9093 --topic securing-kafka --producer.config /etc/kafka/producer_ssl.properties

message1

message2

message3

producer_ssl.properties

bootstrap.servers=kafka.example.com:9093

security.protocol=SSL

ssl.truststore.location=/etc/security/tls/kafka.client.truststore.jks

ssl.truststore.password=test1234

ssl.keystore.location=/etc/security/tls/kafka.client.keystore.jks

ssl.keystore.password=test1234

ssl.key.password=test1234

The console consumer is a convenient way to consume messages:

kafka-console-consumer --bootstrap-server kafka.example.com:9093 --topic securing-kafka --new-consumer --from-beginning --consumer.config /etc/kafka/consumer_ssl.properties

consumer_ssl.properties

bootstrap.servers=kafka.example.com:9093

group.id=securing-kafka-group

security.protocol=SSL

ssl.truststore.location=/etc/security/tls/kafka.client.truststore.jks

ssl.truststore.password=test1234

ssl.keystore.location=/etc/security/tls/kafka.client.keystore.jks

ssl.keystore.password=test1234

ssl.key.password=test1234

The output should be:

message1

message2

message3

SASL/Kerberos

Using the console clients via SASL/Kerberos is similar, but we also need to pass the location of the JAAS file via a system property. An example for the console consumer follows:

export KAFKA_OPTS="-Djava.security.auth.login.config=/etc/kafka/kafka_client_jaas.conf"

kafka-console-consumer --bootstrap-server kafka.example.com:9095 --topic securing-kafka --new-consumer --from-beginning --consumer.config /etc/kafka/consumer_sasl.properties

consumer_sasl.properties

bootstrap.servers=kafka.example.com:9095

group.id=securing-kafka-group

security.protocol=SASL_SSL

sasl.kerberos.service.name=kafka

ssl.truststore.location=/etc/security/tls/kafka.client.truststore.jks

ssl.truststore.password=test1234

ssl.keystore.location=/etc/security/tls/kafka.client.keystore.jks

ssl.keystore.password=test1234

ssl.key.password=test1234

Java Clients

Configuring the new Java clients (both producer and consumer) is simply a matter of passing the configs used in the “Console Clients” example above to the relevant client constructor. This can be done by either loading the properties from a file or by setting the properties programmatically. An example of setting the properties programmatically for a consumer configured to use TLS follows:

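import java.util.Properties;
import org.apache.kafka.clients.CommonClientConfigs;
import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.KafkaConsumer;
import org.apache.kafka.common.config.SslConfigs;

Properties props = new Properties();

props.setProperty(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "localhost:9093");

props.setProperty(ConsumerConfig.GROUP_ID_CONFIG, "securing-kafka-group");

// The consumer needs deserializers; String keys and values are an assumption for this example
props.setProperty(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");

props.setProperty(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");

props.setProperty(CommonClientConfigs.SECURITY_PROTOCOL_CONFIG, "SSL");

props.setProperty(SslConfigs.SSL_TRUSTSTORE_LOCATION_CONFIG, "/etc/security/tls/kafka.client.truststore.jks");

props.setProperty(SslConfigs.SSL_TRUSTSTORE_PASSWORD_CONFIG, "test1234");

props.setProperty(SslConfigs.SSL_KEYSTORE_LOCATION_CONFIG, "/etc/security/tls/kafka.client.keystore.jks");

props.setProperty(SslConfigs.SSL_KEYSTORE_PASSWORD_CONFIG, "test1234");

props.setProperty(SslConfigs.SSL_KEY_PASSWORD_CONFIG, "test1234");

KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);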
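The other option mentioned above, loading the properties from a file, can be sketched with the JDK alone. ClientConfigLoader is a hypothetical helper, and the list of keys it checks is an assumption chosen for a TLS client, not a list defined by Kafka. The returned Properties object can then be passed to the client constructor in the same way as the programmatic example.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Properties;

/** Hypothetical helper: loads a client properties file and reports missing TLS settings. */
final class ClientConfigLoader {
    // Assumed minimum for a TLS client; extend with keystore keys for mutual authentication.
    private static final List<String> REQUIRED_TLS_KEYS = Arrays.asList(
            "bootstrap.servers",
            "security.protocol",
            "ssl.truststore.location",
            "ssl.truststore.password");

    /** Reads a file such as /etc/kafka/consumer_ssl.properties into a Properties object. */
    static Properties load(Path file) throws IOException {
        Properties props = new Properties();
        try (InputStream in = Files.newInputStream(file)) {
            props.load(in);
        }
        return props;
    }

    /** Returns the required keys that are absent from the given properties. */
    static List<String> missingKeys(Properties props) {
        List<String> missing = new ArrayList<>();
        for (String key : REQUIRED_TLS_KEYS) {
            if (props.getProperty(key) == null) {
                missing.add(key);
            }
        }
        return missing;
    }

    public static void main(String[] args) throws IOException {
        // Usage: java ClientConfigLoader /etc/kafka/consumer_ssl.properties
        Properties props = load(Paths.get(args[0]));
        System.out.println("Missing keys: " + missingKeys(props));
    }
}
```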

Debugging problems

It is a common occurrence that things don’t work on the first attempt when it comes to configuring security systems. Debugging output can be quite helpful in order to diagnose the cause of the problem:

- SSL debug output can be enabled via the javax.net.debug system property, e.g. export KAFKA_OPTS=-Djavax.net.debug=all

- SASL debug output can be enabled via the sun.security.krb5.debug system property, e.g. export KAFKA_OPTS=-Dsun.security.krb5.debug=true

- Kafka authorizer logging can be enabled by changing WARN to DEBUG in the following line of the log4j.properties file included in the Kafka distribution (in /etc/kafka/log4j.properties in the Confluent Platform):

log4j.logger.kafka.authorizer.logger=WARN, authorizerAppender

Conclusion

We have shown how the security features introduced in Apache Kafka 0.9 (part of Confluent Platform 2.0) can be used to secure a Kafka cluster. We focused on ensuring that authentication was required for all network communication and network encryption was applied to all broker/client and inter-broker network traffic.

These security features were a very good first step, but we will be making them better, faster and simpler to operate in subsequent releases. As ever, we would love to hear your feedback in the Kafka mailing list or Confluent Platform Google Group.

Acknowledgements

We would like to thank Ben Stopford, Flavio Junqueira, Gwen Shapira, Joel Koshy, Jun Rao, Parth Brahmbhatt, Rajini Sivaram, and Sriharsha Chintalapani for their contributions to the security features introduced in Apache Kafka 0.9, and our colleagues at Confluent for feedback on the draft version of this post.
