Apache Kafka

Data Products, Data Contracts, and Change Data Capture

Change data capture is a popular method to connect database tables to data streams, but it comes with drawbacks. The next evolution of the CDC pattern, first-class data products, provides resilient pipelines that support both real-time and batch processing while isolating upstream systems...

Unlock Cost Savings with Freight Clusters–Now in General Availability

Confluent Cloud Freight clusters are now Generally Available on AWS. In this blog, learn how Freight clusters can save you up to 90% at GBps+ scale.

Contributing to Apache Kafka®: How to Write a KIP

Learn how to contribute to open source Apache Kafka by writing Kafka Improvement Proposals (KIPs) that solve problems and add features! Read on for real examples.

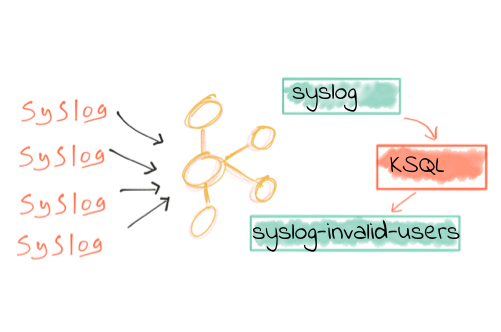

We ❤ syslogs: Real-time syslog Processing with Apache Kafka and KSQL – Part 2: Event-Driven Alerting with Slack

In the previous article, we saw how syslog data can be easily streamed into Apache Kafka® and filtered in real time with KSQL. In this article, we’re going to see how […]

No More Silos: How to Integrate Your Databases with Apache Kafka and CDC

One of the most frequent questions and topics that I see come up on community resources such as StackOverflow, the Confluent Platform mailing list, and the Confluent Community Slack group, […]

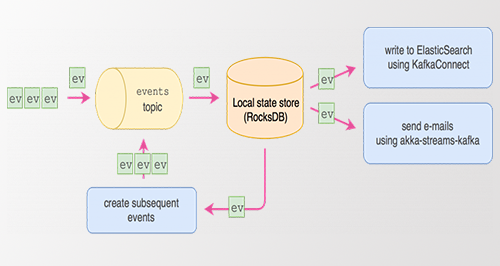

Event Sourcing Using Apache Kafka

Adam Warski is one of the co-founders of SoftwareMill, where he codes mainly using Scala and other interesting technologies. He is involved in open-source projects, such as sttp, MacWire, Quicklens, […]

Should You Put Several Event Types in the Same Kafka Topic?

If you adopt a streaming platform such as Apache Kafka, one of the most important questions to answer is: what topics are you going to use? In particular, if you […]

Handling GDPR with Apache Kafka: How does a log forget?

If you follow the press around Apache Kafka you’ll probably know it’s pretty good at tracking and retaining messages, but sometimes removing messages is important too. GDPR is a good […]

Toward a Functional Programming Analogy for Microservices

Microservices are all the rage these days. Passionate, thoughtful advocates and detractors present compelling arguments for and against the architectural style. Usually, these arguments boil down to whether organizations should adopt, refrain from, or abandon microservices...

Apache Kafka Goes 1.0

It has been seven years since we first set out to create the distributed streaming platform we know now as Apache Kafka®. Born initially as a highly scalable messaging system, […]

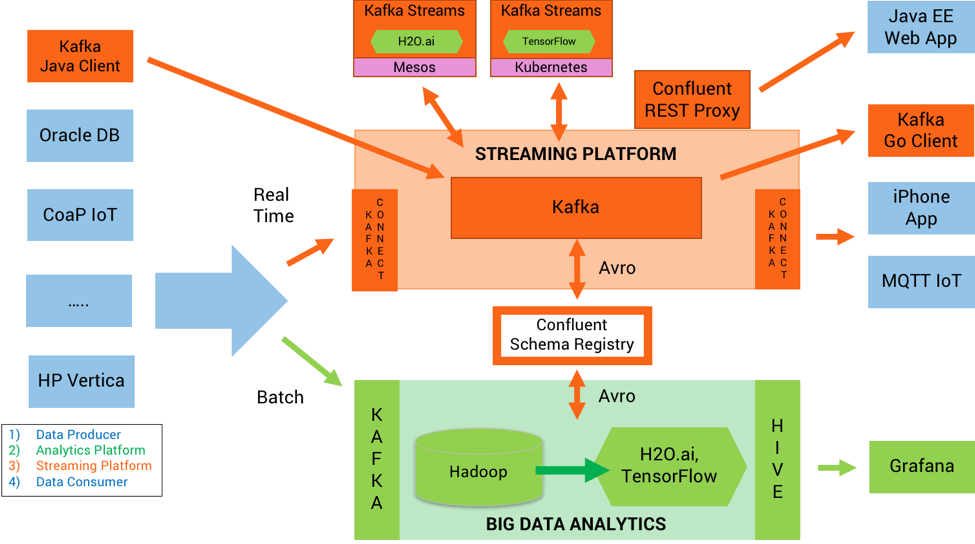

How to Build and Deploy Scalable Machine Learning in Production with Apache Kafka

Intelligent real-time applications are a game changer in any industry. Machine learning and its sub-topic, deep learning, are gaining momentum because […]

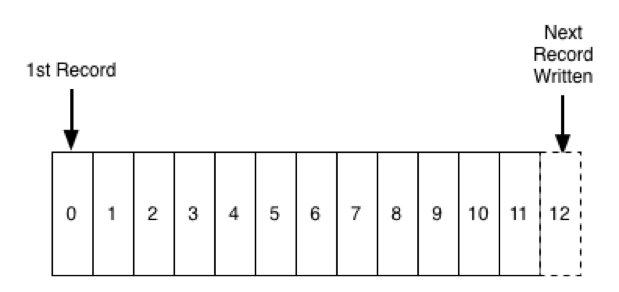

It's Okay To Store Data In Kafka

A question people often ask about Apache Kafka® is whether it is okay to use it for longer-term storage. Kafka, as you might know, stores a log of records, […]

How Apache Kafka is Tested

Apache Kafka® is used in thousands of companies, including some of the most demanding, large-scale, and critical systems in the world. Its largest users run Kafka across thousands […]

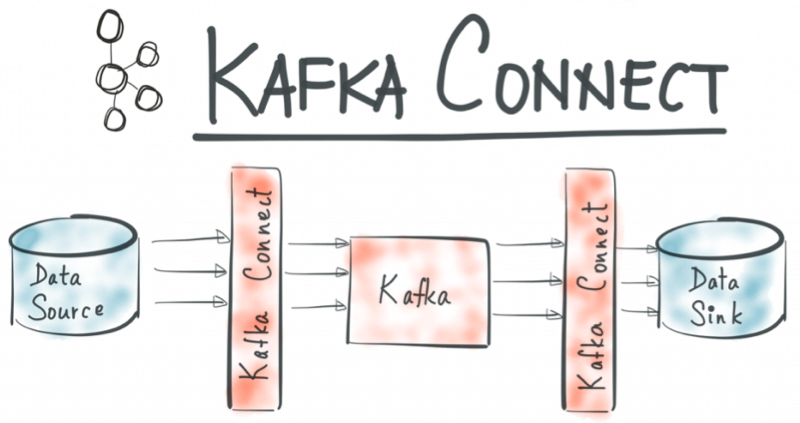

The Simplest Useful Kafka Connect Data Pipeline in the World…or Thereabouts – Part 3

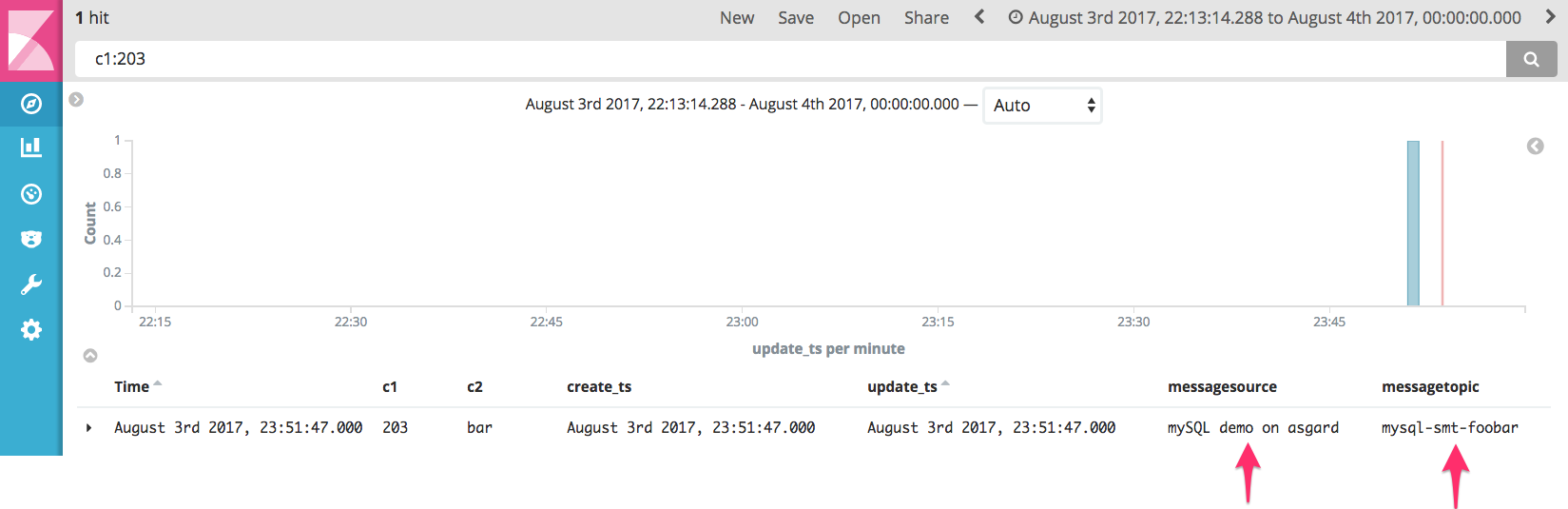

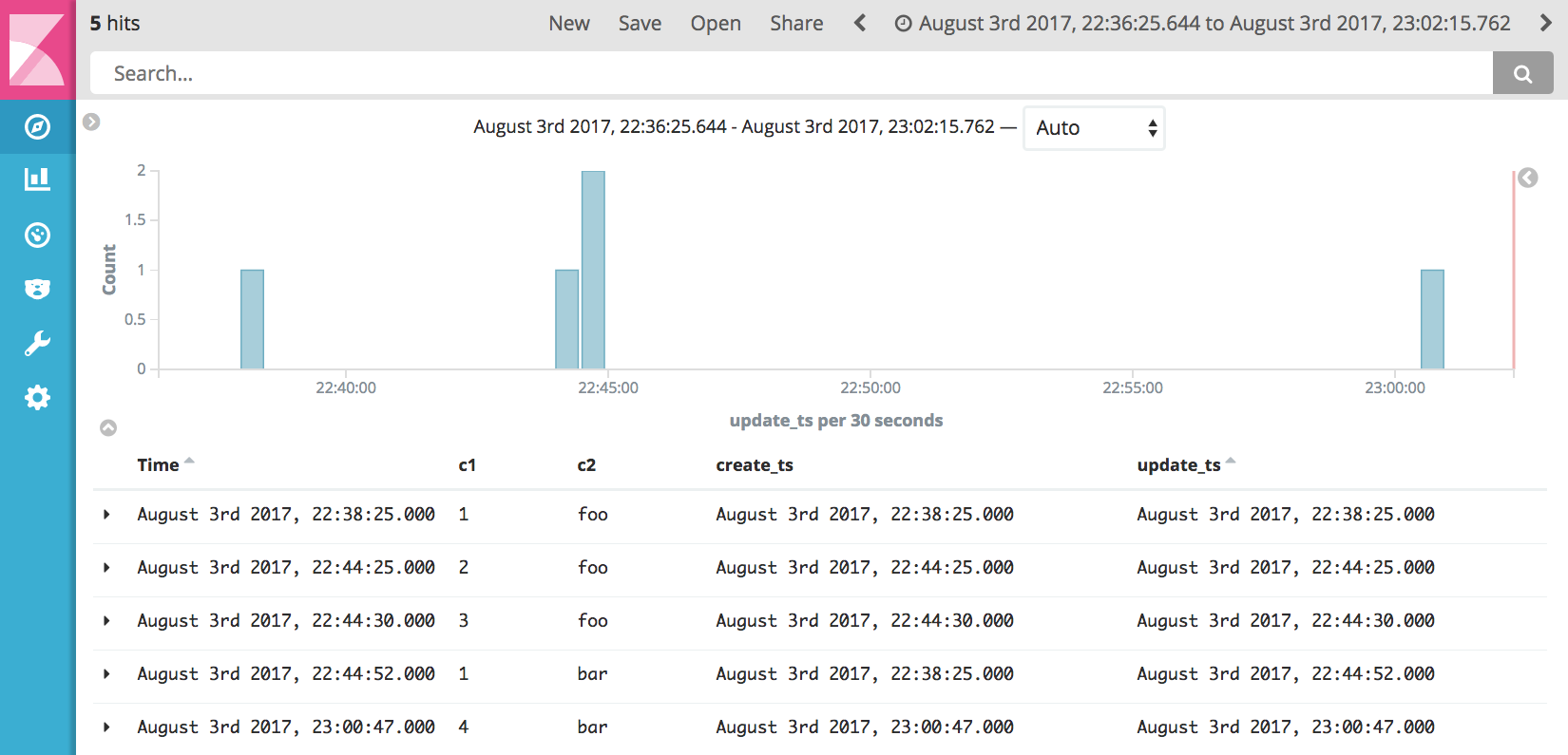

We saw in the earlier articles (part 1, part 2) in this series how to use the Kafka Connect API to build out a very simple, but powerful and scalable, streaming […]

The Simplest Useful Kafka Connect Data Pipeline in the World…or Thereabouts – Part 2

In the previous article in this blog series I showed how easy it is to stream data out of a database into Apache Kafka®, using the Kafka Connect API. I […]

The Simplest Useful Kafka Connect Data Pipeline in the World…or Thereabouts – Part 1

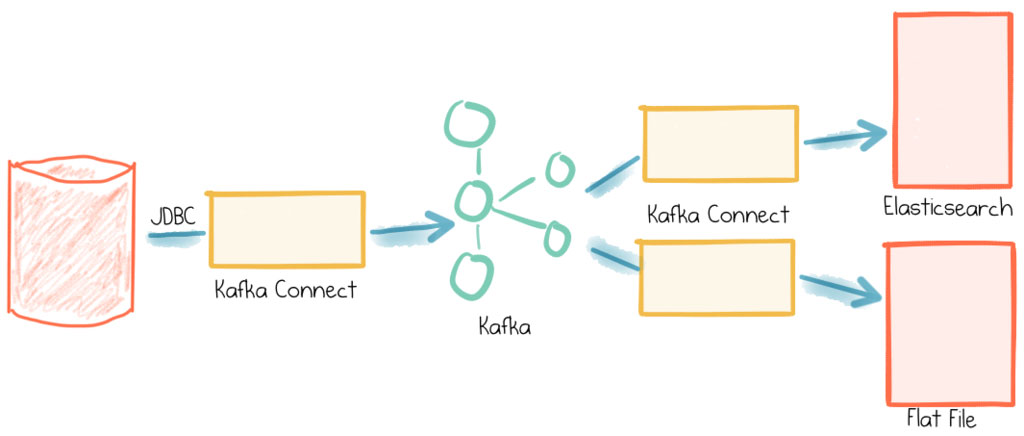

This short series of articles is going to show you how to stream data from a database (MySQL) into Apache Kafka® and from Kafka into both a text file and Elasticsearch—all […]

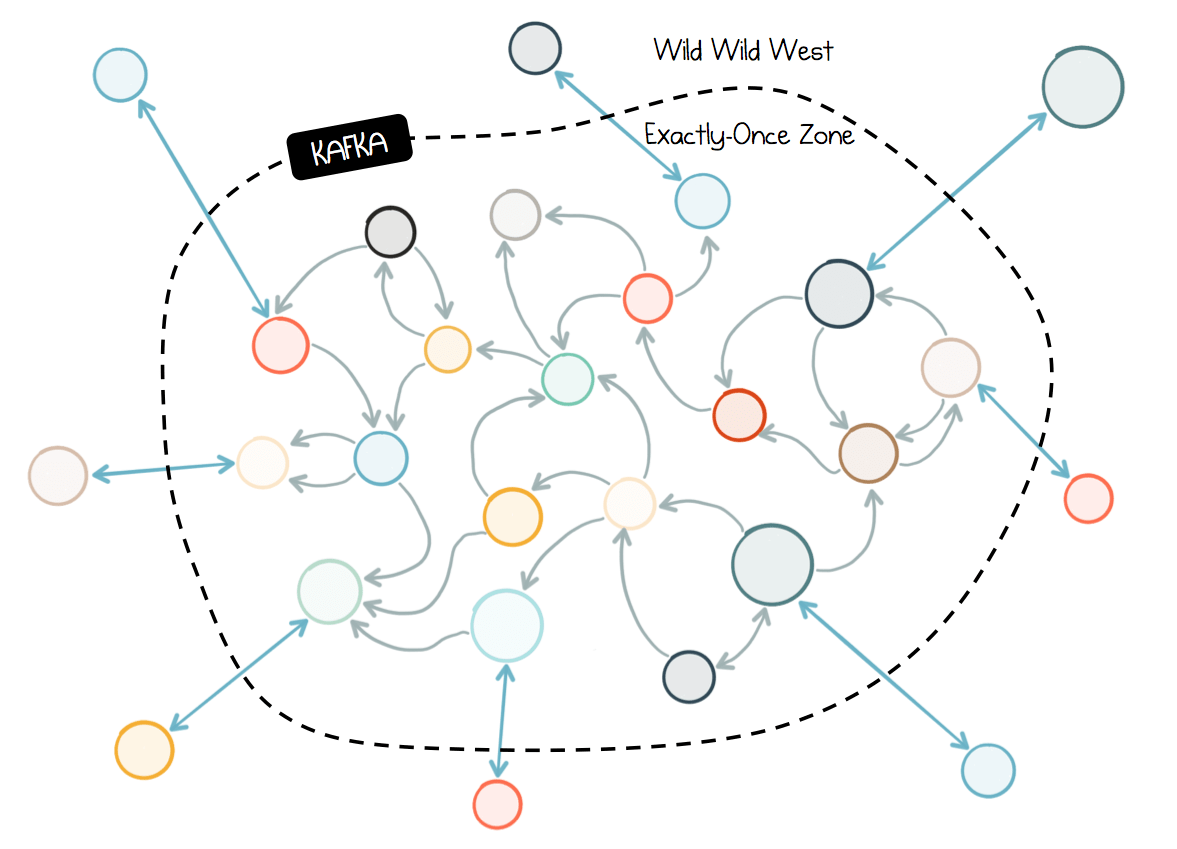

Chain Services with Exactly-Once Guarantees

This fourth post in the microservices series looks at how we can sew together complex chains of services, efficiently and accurately, using Apache Kafka’s Exactly-Once guarantees. […]